FaceApp – Is There Anything to Worry About?

FaceApp – just a bit of fun or something more sinister?

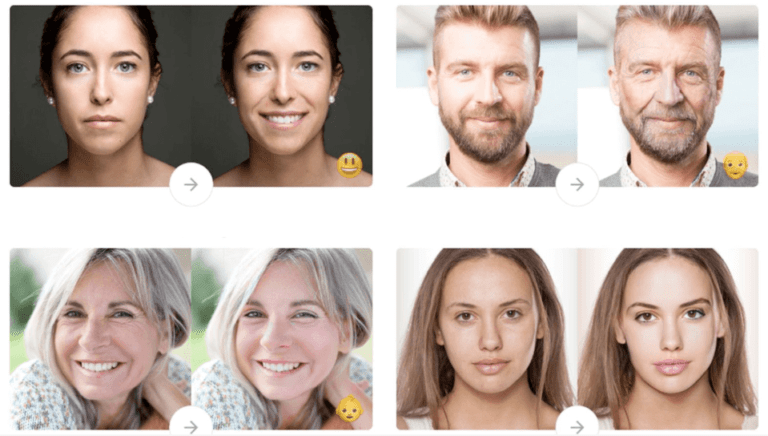

You have probably seen the countless ‘Old Person’ selfies popping up on your social media feeds. Everyone from friends to celebrities has recently been using FaceApp, the viral AI photo-editing app. The app manipulates photos to make people look older or younger, and it offers a multitude of other hilarious effects. This is not the first time the app has gone viral. This time, however, there is growing skepticism about the way it operates. So what are these concerns, and should we be worried?


Growing Concerns

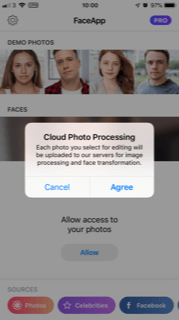

One of the biggest concerns making the rounds on social media is the claim that the app can access and download a device's entire camera roll in the background, without the user’s knowledge or permission. That would be a serious privacy problem, because people frequently screenshot sensitive information such as passwords, addresses and banking details. Users also worried that the photos they selected were being uploaded to external servers. Many people grew uneasy on learning that the app was created in Russia. What’s more, the app was sending photos to an unknown cloud location without informing the user.

Users also pointed out that FaceApp lets you upload photos even when you haven’t granted it access to your photos. A clause in the app’s Terms and Conditions has also caused concern. It states: “You grant FaceApp…license to use, reproduce, modify, adapt, publish, translate, create derivative works from, distribute, publicly perform and display your User Content…”.

If you use #FaceApp you are giving them a license to use your photos, your name, your username, and your likeness for any purpose including commercial purposes (like on a billboard or internet ad) — see their Terms: https://t.co/e0sTgzowoN pic.twitter.com/XzYxRdXZ9q

— Elizabeth Potts Weinstein (@ElizabethPW) July 17, 2019

FaceApp’s Response

In response to these concerns, FaceApp issued a statement saying that, although it does process photos in the cloud, only photos individually selected by the user are edited. According to the company, the app does not have access to a device’s entire photo library, and no user data is shared with third parties or transferred to Russia, even though its core R&D team is based there. FaceApp also confirmed that its servers store photos for up to 48 hours, and that users can request the removal of their data from those servers. To explain how photos can be uploaded without photo-library permission, the company pointed to an API introduced in iOS 11 that lets a user pick individual photos for an app to process. According to Apple, the act of tapping on a photo to select it provides ‘user intent’ (and consent). FaceApp has also added an in-app notice stating that any selected images will be uploaded to the cloud for processing.

Authorities Investigate

Following these allegations and the growing media storm around the app, US Senate Minority Leader Chuck Schumer has called on the FBI and the Federal Trade Commission (FTC) to investigate FaceApp. On Twitter, the Senator called it “deeply troubling” that citizens’ personal data could end up with a “hostile foreign power”. Meanwhile, the UK’s Information Commissioner’s Office (ICO), which recently announced record proposed fines against Marriott and British Airways over data breaches under the GDPR, has said it is considering these allegations of personal data misuse and is monitoring consumer concerns. A spokesperson for the watchdog advised users “not to provide any personal details until they are clear about how they will be used”.

The Takeaway Message

So should we worry about FaceApp? Is Russia collecting our personal data to train facial recognition software? According to FaceApp itself and to security researchers, the situation is probably not that extreme. However, we should still be careful about how much access we give apps to the personal information on our phones. Many apps can access personal data such as our camera roll, SMS messages and social media accounts without our knowledge. Even when the intent is innocent, companies can use this data for any number of other purposes. So when you download an app, make sure you understand what data it can access. Mobile endpoint protection solutions, like Corrata, are also becoming increasingly necessary as excessive data collection becomes a real threat.